Top Guardian Agents for Enterprise AI Security (2026): Key Platforms, Features & Deployment

Key Takeaways

What Are Guardian Agents for Enterprise AI Security?

Guardian agents are security control systems that monitor and govern enterprise AI agents, copilots, and LLM applications at runtime. They supervise how AI systems interact with prompts, data, tools, and APIs to ensure activity aligns with organizational security policies.

Unlike traditional monitoring tools that analyze events after they occur, guardian agents inspect AI activity in real time. They evaluate prompts and outputs, detect risky behavior, and enforce policy boundaries that prevent sensitive data exposure or unauthorized system access.

6 Top Guardian Agent Platforms for Enterprise AI Security in 2026

The guardian agent market is emerging quickly, with platforms focused on runtime security, governance, observability, and agent oversight for enterprise AI. The following tools represent leading platforms in this space:

1. Opsin

Opsin is an enterprise AI security platform designed to discover, monitor, and govern how AI agents, copilots, and generative AI applications interact with enterprise data. The platform provides continuous visibility into AI usage across SaaS environments and internal systems, helping security teams identify oversharing risks, unsafe prompts, and policy violations in AI interactions. By monitoring prompts, responses, and data flows in runtime, Opsin helps organizations maintain control over how AI tools access and expose sensitive information.

Key capabilities:

- Discovery and inventory of enterprise AI agents, copilots, and GenAI applications

- Continuous monitoring of prompts, outputs, and data access across AI tools

- Detection of oversharing, sensitive data exposure, and risky AI interactions

- Policy-driven controls to identify and investigate AI misuse or unsafe behavior

Best for: Enterprises adopting generative AI, copilots, and internal AI agents that require visibility into AI activity and protection against sensitive data exposure. Enterprises heavily invested in Microsoft 365 or Google Workspace that require deep visibility into how Copilots and agents access sensitive "overshared" data.

2. ServiceNow AI Control Tower

ServiceNow AI Control Tower is a centralized governance platform that helps enterprises oversee, manage, and secure AI systems across their organization. Built on the ServiceNow platform, it provides visibility into AI models, agents, and workflows while helping organizations track AI initiatives, enforce governance policies, and align AI deployments with enterprise risk and compliance frameworks.

Key capabilities

- Centralized visibility into enterprise AI models, agents, and initiatives

- AI inventory and lifecycle management across internal and third-party AI systems

- Governance controls to align AI deployments with organizational policies

- Integration with ServiceNow workflows for oversight and operational management

Best for: Enterprises using ServiceNow that want centralized governance and oversight of AI initiatives across business workflows.

3. Credo AI

Credo AI is an enterprise AI governance platform that helps organizations manage risk, compliance, and oversight across their AI systems. The platform provides centralized visibility into AI models, applications, and use cases across the enterprise, allowing teams to document AI deployments, assess governance requirements, and align AI initiatives with regulatory and organizational policies.

Key capabilities

- Centralized registry and inventory of enterprise AI systems and use cases

- Governance workflows to assess risk and track responsible AI practices

- Policy Packs that map AI deployments to regulatory frameworks and internal policies

- Reporting and documentation to support regulatory and compliance requirements

Best for: Enterprises and regulated industries that need structured governance and compliance oversight for AI initiatives.

4. Lakera Guard

Lakera Guard is a runtime security platform designed to protect applications that use large language models. The platform sits between users and AI models, inspecting prompts and model outputs in real time to detect threats such as prompt injection, malicious instructions, and sensitive data leakage. By acting as a protective layer for AI interactions, Lakera Guard helps organizations enforce policies and prevent unsafe responses before they reach users or downstream systems.

Key capabilities

- Real-time detection of prompt injection and adversarial inputs

- Inspection and filtering of prompts and model responses before execution

- Protection against sensitive data leakage and unsafe outputs

- API-based integration with enterprise LLM applications and AI workflows

Best for: Enterprises deploying LLM-powered applications or AI copilots that require runtime protection against prompt attacks and unsafe model behavior.

5. LangSmith

LangSmith is an LLM observability and evaluation platform developed by LangChain to help teams build, test, and monitor AI applications and agent workflows. The platform provides detailed tracing of prompts, responses, and tool calls, allowing developers to understand how LLM applications behave during development and in production. By capturing interaction logs and evaluation data, LangSmith helps teams debug issues, test prompts, and improve the reliability of AI-powered applications.

Key capabilities

- End-to-end tracing of prompts, responses, and agent tool calls

- Debugging and monitoring of LLM and agent workflows

- Evaluation tools for testing prompts and model outputs

- Observability dashboards for analyzing performance and failures

Best for: Development teams building LLM applications or AI agents that need observability, debugging, and evaluation tools to improve application reliability.

6. Arize Phoenix

Arize Phoenix is an open-source LLM observability and evaluation platform developed by Arize AI. It helps teams monitor, analyze, and debug large language model applications and AI agents by capturing detailed traces of prompts, responses, and system behavior. The platform provides visibility into how AI systems perform in production, allowing developers to investigate failures, evaluate model outputs, and improve the reliability of AI applications.

Key capabilities

- End-to-end tracing of prompts, responses, and agent interactions

- Evaluation tools for analyzing LLM outputs and model performance

- Debugging workflows to investigate failures and unexpected responses

- Observability dashboards to monitor LLM application behavior

Best for: Engineering teams building LLM applications or AI agents that require open source observability and evaluation tools to analyze model behavior and improve performance.

Key Features of Guardian Agent Platforms

Guardian agent platforms provide capabilities that help organizations monitor AI behavior, enforce governance policies, and maintain visibility into AI systems operating across enterprise environments.

Types of Guardian Agents in Enterprise AI Security

Guardian agents operate at different points in AI workflows to control specific risks. The following types represent the most common roles used to supervise enterprise AI systems.

- Runtime Policy Enforcement Agents: enforce security and governance policies while AI systems are running. They ensure AI actions follow predefined rules related to data access, system interactions, and organizational compliance requirements.

- Input and Output Validation Agents: inspect prompts entering an AI system and responses generated by the model. Their role is to detect unsafe instructions, malicious inputs, or outputs that may expose sensitive information or violate policy.

- Runtime Behavior Monitoring Agents: continuously monitor how AI systems behave during operation. They track prompts, decisions, and tool usage to identify anomalies, unexpected behavior, or policy violations.

- Multi-Agent Orchestration Guardrails: supervise workflows where multiple AI agents interact with each other. They ensure agents follow defined workflows and prevent cascading errors, unsafe actions, or uncontrolled task execution.

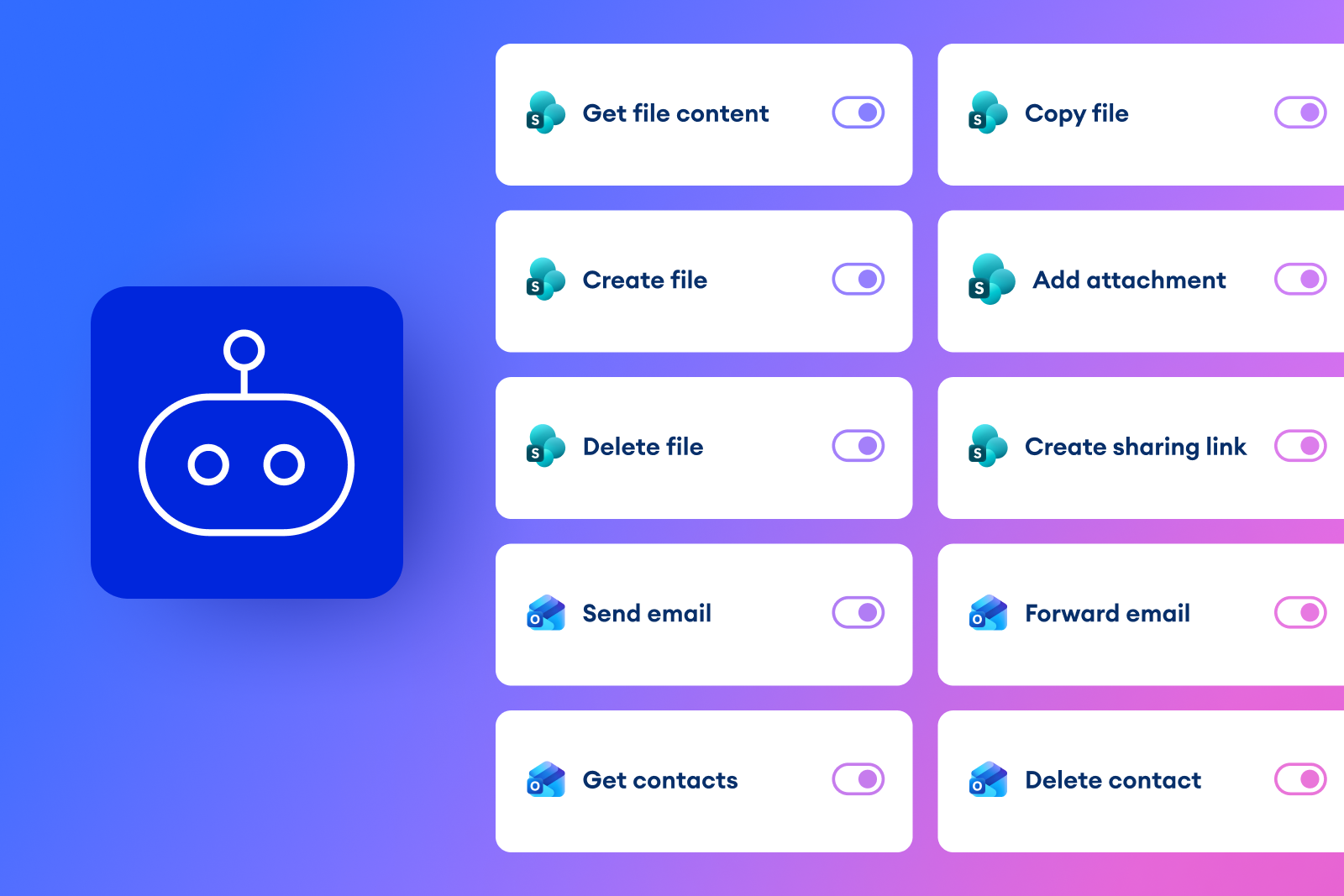

- API and Tool Access Control Agents: manage how AI systems access external tools, APIs, and enterprise services. They verify permissions, restrict unauthorized actions, and control how AI agents interact with connected systems.

Real-World Use Cases for Guardian Agents in Enterprise AI

Guardian agents supervise how AI systems operate in production environments. Common use cases show how these controls help manage risk and maintain visibility across enterprise AI deployments.

Protecting Copilot and ChatGPT Enterprise Deployments

Organizations increasingly deploy enterprise copilots and generative AI assistants in internal workflows. Guardian agents monitor prompts and responses to detect unsafe instructions, policy violations, or attempts to extract sensitive company data.

Governing Custom AI Agents and Copilots

Enterprises building internal AI agents require controls to ensure those systems operate within defined policies. Guardian agents supervise these deployments by monitoring agent actions, system access, and automated task execution.

Preventing Prompt-Based Data Exposure

Improper prompts can cause AI systems to reveal confidential information or generate unsafe responses. Guardian agents inspect prompts and outputs to detect potential data leakage and block responses that violate security policies.

Monitoring Tool Use and Sensitive Data Access

Many AI agents interact with APIs, internal systems, and enterprise data sources. Guardian agents track these interactions to ensure agents only access approved tools and prevent unauthorized exposure of sensitive information.

How to Evaluate Enterprise Guardian Agent Platforms

When evaluating guardian agent platforms, focus on visibility into AI behavior, policy enforcement, and scalability. The criteria below outline the key factors for production readiness:

Guardian Agent Deployment Process Step by Step

Deploying guardian agents requires a structured approach that aligns AI oversight with enterprise security and governance controls. This process typically includes the steps outlined here.

- Identify High-Risk AI Workflows: Determine where AI agents interact with sensitive data, internal systems, or external APIs. Prioritizing these workflows helps organizations focus protection on areas with the highest security and compliance risk.

- Define Runtime Policies: Establish rules that govern how AI agents can access data, execute actions, and generate responses. These policies define acceptable behavior and operational boundaries for AI systems.

- Integrate With AI and Security Infrastructure: Connect the guardian platform with AI applications, data sources, and monitoring systems so AI activity can be observed and governed across the environment.

- Test Against Adversarial Scenarios: Simulate prompt injection, data leakage attempts, and other attack patterns to evaluate how AI systems and guardian controls respond under realistic conditions.

- Monitor and Refine Controls: Continuously review AI activity, alerts, and policy outcomes. Adjust monitoring rules and governance policies as AI usage evolves.

Trade-Offs and Implementation Challenges of Guardian Agents

Guardian agents improve visibility and control over enterprise AI, but their implementation introduces operational and architectural challenges.

- Added Architectural Complexity: Introducing a guardian layer adds another component to the AI stack. Organizations must design how this layer interacts with AI models, applications, and monitoring systems without disrupting existing workflows.

- Integration Effort With Existing Security Stacks: Guardian platforms often need to connect with existing tools such as logging systems, governance platforms, or security monitoring solutions. Integrating these systems can require additional configuration and operational planning.

- Potential Performance Overhead: Inspecting prompts, responses, or agent actions can introduce additional processing steps. In high-volume AI environments, organizations must ensure monitoring controls do not significantly impact application latency.

- Ongoing Policy and Governance Management: Guardian agents rely on defined policies and rules to supervise AI systems. Security and governance teams must continuously review and update these policies as AI deployments expand or new risks emerge.

Best Practices for Implementing Guardian Agents in Enterprise AI

These best practices help organizations deploy guardian agents while maintaining visibility, policy control, and operational stability.

Why Opsin Leads in Runtime Guardian Agent Protection

Opsin focuses on protecting enterprise AI deployments at runtime, where prompts, AI responses, and data interactions create real security risks. The platform provides visibility, monitoring, and policy enforcement to help organizations govern how AI agents and copilots operate across enterprise systems.

- Discovery of Enterprise AI Agents and Copilots: Opsin helps organizations identify AI copilots, agents, and generative AI applications operating across the enterprise. This visibility allows security teams to understand where AI interacts with sensitive systems and data, often beginning with an AI readiness assessment to evaluate enterprise AI exposure.

- Continuous Monitoring of Prompts, Data Exposure, and Agent Risk: The platform continuously monitors prompts, responses, and AI interactions to detect risky behavior such as sensitive data exposure or unsafe prompt activity. These capabilities are supported through Opsin’s AI detection and response approach, which helps teams investigate and manage AI-related risks.

- Policy-Driven Detection of Misuse and Oversharing: Opsin applies policy-driven rules to detect misuse of AI tools and prevent oversharing of confidential information through prompts or responses. This oversight supports enterprise strategies for ongoing oversharing protection when employees interact with AI systems.

- Enterprise-Grade Logging, Visibility, and Investigation Context: Opsin provides detailed logging and contextual visibility into AI activity across enterprise systems. Security teams can review prompts, responses, and data access patterns to investigate incidents and understand how AI systems behave in production environments.

- Integration With Existing Security and Governance Workflows: The platform integrates with enterprise governance and security processes so AI oversight can operate alongside existing monitoring practices. Many organizations begin by performing an AI security assessment to evaluate how AI tools interact with sensitive enterprise data and applications.

Conclusion

Enterprise AI is moving fast, and the rise of autonomous agents, copilots, and LLM-driven workflows is expanding the enterprise attack surface just as quickly. Guardian agent platforms are emerging as the control layer that allows organizations to deploy these systems without losing visibility or governance. By monitoring prompts, enforcing policies, and tracking how AI interacts with enterprise data and tools, these platforms help security teams maintain oversight as AI adoption scales. For organizations investing in enterprise AI, implementing runtime supervision is quickly becoming a necessary part of responsible deployment.

FAQ

How are guardian agents different from traditional security monitoring tools?

Guardian agents actively enforce policies in real time, while traditional tools typically detect issues after they occur.

• Inspect prompts and outputs before execution to block sensitive data leaks

• Apply runtime policies to restrict unsafe tool or API usage

• Intervene inline rather than relying on alerts after exposure

• Correlate AI behavior with security context for faster response

Learn more about guardian agents in our dedicated guide.

What risks do guardian agents actually prevent in enterprise AI systems?

They prevent data leakage, prompt injection attacks, and unauthorized system access caused by unsafe AI behavior.

• Block prompts that attempt to extract confidential enterprise data

• Detect and filter malicious or adversarial instructions

• Enforce least-privilege access to APIs and internal systems

• Prevent oversharing in copilots connected to enterprise knowledge bases

See how oversharing risks emerge in real deployments.

How do guardian agents scale across multi-agent and agentic AI workflows?

They introduce orchestration guardrails that monitor and constrain interactions between multiple autonomous agents.

• Enforce workflow boundaries between collaborating agents

• Track cross-agent data flows to prevent cascading leakage

• Apply shared policies across distributed agent ecosystems

• Detect emergent behavior patterns that violate intent or governance

Explore deeper strategies for securing agentic AI systems.

What are the biggest performance and architecture trade-offs when deploying guardian agents?

The main trade-offs involve latency, system complexity, and ongoing policy tuning requirements.

• Introduce inline inspection layers that may impact response time

• Require tight integration with identity, APIs, and logging systems

• Demand continuous tuning to reduce false positives and alert fatigue

• Add operational overhead for policy lifecycle management

Learn how security-first architectures balance speed and control in AI deployments.

How does Opsin provide visibility into AI agent behavior at runtime?

Opsin captures and analyzes prompts, responses, and data access patterns across enterprise AI systems in real time.

• Build a full inventory of copilots, agents, and GenAI applications

• Trace how AI interacts with sensitive enterprise data sources

• Surface oversharing risks and unsafe prompt patterns

• Provide investigation-ready logs with full interaction context

Start with an AI exposure baseline using Opsin’s readiness assessment