What Are Guardian Agents? The Complete Guide to AI Agent Oversight

What Are Guardian Agents in AI?

As enterprises deploy AI agents to automate tasks, retrieve data, and interact with business systems, a critical question emerges: who watches the agents? Guardian Agents are specialized oversight mechanisms that supervise, validate, and control the actions of other AI agents in real time.

Unlike traditional security controls focused on perimeter defenses or user-level access, Guardian Agents operate at the agent level. They inspect what an AI agent is doing, evaluate whether that action aligns with organizational policies, and decide whether to allow, modify, or block it.

For instance, they intervene before a Copilot Studio agent queries a restricted folder or a custom GPT calls an unauthorized API.
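The allow, modify, or block decision described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular product implements it: the tool names, restricted path prefixes, and redacted fields are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    MODIFY = "modify"
    BLOCK = "block"


@dataclass(frozen=True)
class AgentAction:
    agent_id: str
    tool: str            # connector or API the agent wants to call
    resource: str        # folder path or endpoint being accessed
    fields: tuple = ()   # data fields the action would return


# Hypothetical policy values, chosen only for illustration.
ALLOWED_TOOLS = {"sharepoint.search", "crm.lookup"}
RESTRICTED_PREFIXES = ("/hr/", "/finance/payroll/")
REDACTED_FIELDS = {"ssn", "salary"}


def evaluate(action: AgentAction) -> tuple[Decision, AgentAction]:
    """Return a decision plus the (possibly modified) action."""
    # Block calls to tools that are not on the allow list.
    if action.tool not in ALLOWED_TOOLS:
        return Decision.BLOCK, action
    # Block access to restricted locations.
    if action.resource.startswith(RESTRICTED_PREFIXES):
        return Decision.BLOCK, action
    # Modify the action by stripping sensitive fields instead of blocking it.
    safe_fields = tuple(f for f in action.fields if f not in REDACTED_FIELDS)
    if safe_fields != action.fields:
        return Decision.MODIFY, AgentAction(
            action.agent_id, action.tool, action.resource, safe_fields
        )
    return Decision.ALLOW, action
```

Returning the modified action alongside the decision lets the caller execute the safer version directly, rather than rejecting the request and forcing the agent to retry.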

Guardian Agents vs AI Governance, Supervision, and Observability

Guardian agents are often confused with broader governance or monitoring concepts. The table below clarifies the distinctions:

Why Guardian Agents Are Critical for Enterprise AI Risk Management

As AI agents take on increasingly autonomous roles across the enterprise, the risks they introduce demand a dedicated layer of oversight. Guardian Agents provide that layer, acting as real-time enforcers that monitor, intercept, and govern agent behavior at scale.

The Rise of Autonomous and Multi-Agent Systems

Enterprises increasingly deploy agents that operate with minimal human intervention. Copilot Studio agents, custom GPTs, Gemini Gems, and autonomous workflows chain actions across systems, making decisions independently. Without dedicated oversight, these agents inherit broad permissions and operate outside central security visibility.

Expanding AI Attack Surfaces Across Tools, APIs, and Data Stores

Every tool, API, and data store an agent connects to is a potential exposure point. As agents integrate with SharePoint, Google Workspace, CRM systems, and third-party services, the attack surface grows with each new connection.

Enterprise Data Exposure and Privilege Escalation Risks

AI agents can surface, summarize, and redistribute overshared enterprise data. When agents access files and repositories with over-permissive sharing or legacy access controls, they turn passive data exposure into active propagation across users, teams, and workflows.

Regulatory and Legal Accountability Pressures

Frameworks like the EU AI Act, NIST AI RMF, and ISO/IEC 42001 increasingly expect organizations to demonstrate control over autonomous AI systems. Guardian agents provide the enforcement and audit trail necessary to satisfy these requirements.

Financial and Reputational Impact of Agent Failures

Uncontrolled agent actions can result in data breaches, compliance violations, and operational disruptions. Guardian agents reduce this risk by intervening before harmful actions are completed.

Types of Guardian Agents in Enterprise AI Environments

Guardian Agents take several distinct forms, each designed to address a specific category of risk in enterprise AI deployments.

- Policy-Based Guardian Agents: These agents evaluate every action against a predefined ruleset, specifying which data classifications an agent can access, which APIs are permitted, or what outputs are allowed. Violations trigger blocking or routing for review.

- Behavior-Based Monitoring Agents: Rather than enforcing static rules, behavior-based guardians learn normal activity patterns and flag deviations. If an agent that typically queries marketing data suddenly accesses HR records, the guardian raises an alert.

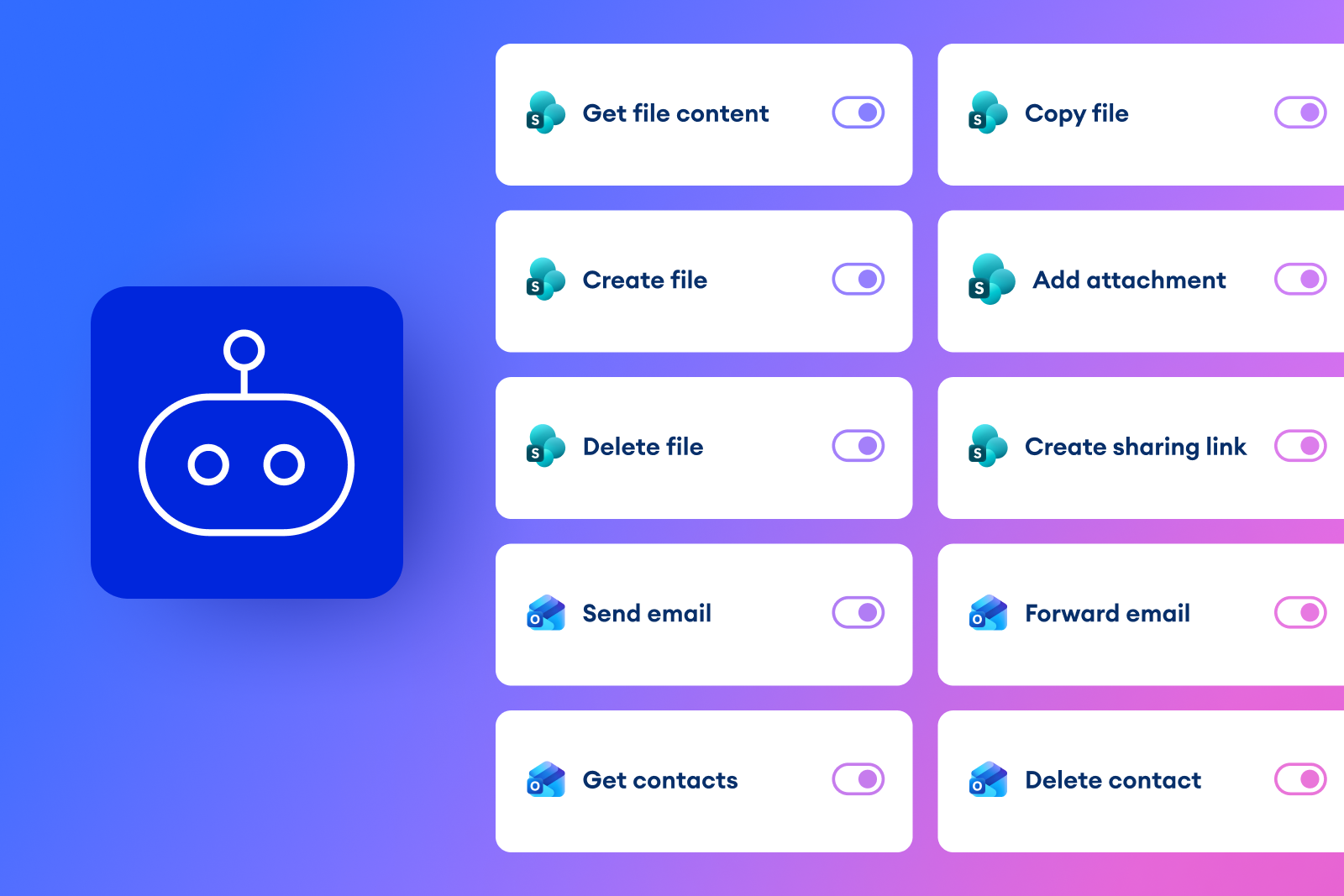

- Tool and API Access Control Agents: These guardians enforce least-privilege access by restricting which connectors, endpoints, and external services each agent is authorized to use.

- Real-Time Action Validation Agents: Operating inline with agent execution, these guardians inspect each action at the moment it occurs, validating parameters, verifying data sensitivity, and confirming authorization.

- Inter-Agent Risk Containment Mechanisms: In multi-agent environments, these mechanisms monitor interactions between agents, preventing one agent from delegating tasks to another in ways that escalate privileges or bypass security boundaries.

- Hybrid Oversight Architectures: Most enterprise deployments combine multiple guardian types. A hybrid approach might pair policy-based enforcement for data access with behavior-based monitoring for anomaly detection, providing defense in depth.
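As a rough sketch of the hybrid approach, the Python below pairs a static policy check with a simple behavior baseline. It assumes a "baseline" is nothing more than the set of data domains an agent touched during a learning window; real behavior-based systems would use richer signals. All class, function, and domain names are hypothetical.

```python
from collections import defaultdict


class BehaviorBaseline:
    """Tracks which data domains each agent touched during a learning window."""

    def __init__(self) -> None:
        self._seen: dict[str, set[str]] = defaultdict(set)

    def learn(self, agent_id: str, domain: str) -> None:
        self._seen[agent_id].add(domain)

    def is_anomalous(self, agent_id: str, domain: str) -> bool:
        # Assumption: an agent with no recorded baseline is treated as anomalous.
        return domain not in self._seen.get(agent_id, set())


def hybrid_check(
    baseline: BehaviorBaseline,
    agent_id: str,
    domain: str,
    allowed_domains: set[str],
) -> str:
    """Policy check first (hard block), then behavior check (soft alert)."""
    if domain not in allowed_domains:
        return "block"
    if baseline.is_anomalous(agent_id, domain):
        return "alert"
    return "allow"
```

In this sketch, an agent that has only ever queried marketing data and then requests HR records would produce an alert if HR is permitted by policy, and a block if it is not.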

How Guardian Agents Work in Practice

Guardian Agents operate across a continuous lifecycle, from discovering what agents exist in the environment to logging every action they take.

Guardian Agents Across the AI Agent Lifecycle

Effective oversight isn’t a one-off exercise. It spans every stage of an agent's existence, from initial development through decommissioning.

Oversight During Development and Testing

Guardian controls should be embedded early. During design and testing, teams should validate that agents request only minimum permissions and that data access scopes are correctly defined.

Runtime Monitoring in Production

Once deployed, agents require continuous monitoring. Guardian agents inspect live actions, compare behavior against baselines, and enforce policies in real time, including detecting privilege escalation.

Change Management and Agent Version Control

When agents are updated or reconfigured, their risk profile can change. Guardian systems should track version changes, re-evaluate permissions, and flag any new tool or data connections introduced during updates.
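One simple way to flag new connections is to diff the tool and data source sets of two agent versions. The sketch below assumes each version can be described by those two sets; the `AgentVersion` type and its field names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentVersion:
    version: str
    tools: frozenset = field(default_factory=frozenset)
    data_sources: frozenset = field(default_factory=frozenset)


def new_connections(old: AgentVersion, new: AgentVersion) -> dict[str, set[str]]:
    """Return tools and data sources present in `new` but not in `old`."""
    return {
        "tools": set(new.tools - old.tools),
        "data_sources": set(new.data_sources - old.data_sources),
    }
```

Any non-empty set in the result is a candidate for permission re-evaluation before the updated agent reaches production.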

Agent Decommissioning and Access Revocation

Retiring an agent requires more than turning it off. Guardian oversight ensures all associated permissions, API keys, and data connections are revoked and logged for compliance.
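A minimal sketch of revoke-and-log, assuming grants are held in an in-memory mapping from agent ID to grant names. In practice the revocation would call each platform's own administrative interface, which is not modeled here; the grant names are hypothetical.

```python
from datetime import datetime, timezone


def decommission(
    agent_id: str,
    grants: dict[str, set[str]],
    audit_log: list[dict],
) -> None:
    """Remove every grant held by agent_id and record each revocation."""
    # pop() removes the agent's entry entirely, so nothing lingers after retirement.
    for grant in sorted(grants.pop(agent_id, set())):
        audit_log.append({
            "agent_id": agent_id,
            "event": "revoked",
            "grant": grant,
            "at": datetime.now(timezone.utc).isoformat(),
        })
```

Logging one entry per revoked grant, rather than a single "agent retired" entry, gives auditors a record of exactly which permissions, keys, and connections were removed.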

Challenges and Tradeoffs in Implementing Guardian Agents

Guardian Agents add significant protective value, but deploying them effectively involves several challenges and tradeoffs.

- Integration Complexity Across Enterprise Systems: Enterprises operate diverse technology stacks spanning Microsoft 365, Google Workspace, custom applications, and third-party SaaS platforms. Guardian agents must integrate across all of these to maintain consistent oversight.

- Ongoing Policy Tuning and Maintenance: Agent behaviors evolve as business processes change. Continuous policy tuning is necessary to avoid both false positives that slow operations and false negatives that miss genuine risks.

- Performance and Latency Considerations: Inline inspection adds processing time. Effective guardian architectures minimize latency through efficient evaluation engines and risk-tiered inspection, where low-risk actions pass quickly while high-risk actions receive deeper scrutiny.

- Lack of Standardized Agent Security Frameworks: Unlike identity management or network security, there are no universally adopted standards for guardian agent operations yet. Enterprises often need to define their own frameworks, drawing from emerging guidance like NIST AI RMF and ISO/IEC 42001.
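The risk-tiered inspection mentioned under performance and latency can be illustrated as follows. This is a sketch under assumptions: the classification labels, the tier threshold, the row limit, and the tool names are hypothetical, and a real deep check would involve far more than a row-count limit.

```python
# Hypothetical values for illustration only.
ALLOWED_TOOLS = {"crm.lookup", "sharepoint.search"}
SENSITIVITY_RANK = {"public": 0, "internal": 1, "confidential": 2, "regulated": 3}
DEEP_INSPECTION_FROM = 2      # rank at which deeper scrutiny starts
MAX_ROWS_SENSITIVE = 100      # bulk reads of sensitive data are refused


def quick_check(action: dict) -> bool:
    """Cheap check applied to every action."""
    return action["tool"] in ALLOWED_TOOLS


def deep_check(action: dict) -> bool:
    """Slower check reserved for high-sensitivity data."""
    return quick_check(action) and action["row_count"] <= MAX_ROWS_SENSITIVE


def inspect_action(action: dict) -> tuple[str, bool]:
    """Return (tier used, whether the action is allowed)."""
    rank = SENSITIVITY_RANK[action["classification"]]
    if rank < DEEP_INSPECTION_FROM:
        return "fast", quick_check(action)
    return "deep", deep_check(action)
```

Routing on data classification keeps the common case cheap while reserving the expensive path for the actions where a mistake costs the most.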

Best Practices for Deploying Guardian Agents in the Enterprise

Effective guardian agent deployment requires clear policies, defined ownership, and ongoing governance. The following practices provide a foundation for building a strategy that is operationally sound and audit-ready.

Real-World Use Cases of Guardian Agents

- AI Agents in Enterprise Automation: Organizations deploying Copilot Studio agents, custom GPTs, or Gemini Gems need guardian oversight to ensure these agents do not access restricted files or surface overshared data. A procurement agent, for example, must be constrained from accessing payroll or HR systems.

- Autonomous Financial Systems: In financial services, agents may process transactions, generate reports, or interact with trading platforms. Guardian agents ensure these systems operate within approved parameters and that every action is logged for regulatory audit under SOX or PCI DSS.

- Multi-Agent Systems: When multiple agents collaborate on complex tasks, guardian mechanisms monitor the entire chain, preventing one agent from granting another access it should not have and ensuring data flows between agents respect classification boundaries.
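For the multi-agent case, one way to prevent escalation through delegation is to require that a delegated task needs nothing beyond the permissions both agents already hold. The sketch below assumes permissions can be expressed as simple string scopes; the scope names are hypothetical.

```python
def can_delegate(
    delegator_scope: set[str],
    delegate_scope: set[str],
    task_needs: set[str],
) -> bool:
    """Allow delegation only if the task stays within BOTH agents' scopes.

    Checking the delegator's scope stops an agent from borrowing another
    agent's access; checking the delegate's scope stops it from being asked
    to do something it was never authorized to do.
    """
    return task_needs <= (delegator_scope & delegate_scope)
```

For example, a procurement agent with `{"read:vendors", "read:contracts"}` could not hand a task requiring `"read:payroll"` to an HR agent, even though the HR agent itself holds that scope.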

Measuring the Effectiveness of Guardian Agent Oversight

Governance without measurement is incomplete. The following metrics give organizations concrete visibility into whether their guardian agent controls are actually working.

Reduction in Unauthorized or High-Risk Agent Actions

Track the volume of blocked or modified agent actions over time. A declining trend in attempted violations suggests that agents are being configured more carefully and that policies are preventing unauthorized behavior, provided detection coverage has not dropped over the same period.

Policy Violation Detection and Enforcement Rates

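Measure the percentage of known violations that guardian controls detected and enforced versus those that were missed and surfaced only later, for example through audits or incident reviews. A high rate indicates that guardian controls are operating as intended.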

Mean Time to Detection and Containment

Calculate how quickly guardian agents identify a violation and contain its impact. Lower mean times reduce the exposure window for high-risk events.
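The detection rate and mean-time metrics reduce to simple arithmetic once the underlying events are recorded. The sketch below assumes missed violations are counted from retrospective review and that each incident is stored as a pair of timestamps; the function names are hypothetical.

```python
from datetime import datetime
from statistics import mean


def detection_rate(detected: int, missed: int) -> float:
    """Share of known violations that the guardian caught (0.0 if none known)."""
    total = detected + missed
    return detected / total if total else 0.0


def mean_minutes(intervals: list[tuple[datetime, datetime]]) -> float:
    """Average elapsed minutes across (start, end) pairs.

    Pass (occurred, detected) pairs for mean time to detection, or
    (detected, contained) pairs for mean time to containment.
    Raises statistics.StatisticsError if `intervals` is empty.
    """
    return mean((end - start).total_seconds() / 60 for start, end in intervals)
```

Keeping detection and containment as separate interval lists makes it possible to see whether delays come from noticing a violation or from acting on it.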

Audit Readiness and Compliance Coverage

Evaluate the completeness of audit logs and the percentage of agent actions captured and documented. Strong coverage ensures the organization can satisfy regulatory audits.

How Opsin Delivers Enterprise-Grade Guardian Agent Oversight

Opsin provides the visibility, enforcement, and audit capabilities enterprises need to govern AI agents at scale.

- Automated Discovery of Enterprise AI Agents and Tool Access: Through Agent Defense, Opsin continuously scans the enterprise environment to discover all active AI agents, including Copilot Studio agents, custom GPTs, and Gemini Gems, mapping each agent's permissions, data connections, and tool access.

- Real-Time Monitoring and Inspection of Agent Actions: Opsin monitors prompts, responses, and data flows across AI tools like Microsoft 365 Copilot, ChatGPT Enterprise, and Gemini, detecting suspicious behavior and policy violations as they happen.

- Policy Enforcement and Guided Remediation: Opsin applies the organization’s AI policies to its environment, detecting violations and providing evidence and step-by-step remediation guidance so security, IT, and data owners can take appropriate action.

- Data Exposure Discovery and Oversharing Remediation: Opsin uncovers overshared or exposed data across platforms like SharePoint, OneDrive, and Google Workspace, enabling organizations to reduce agent access to only what is necessary.

- Centralized Visibility Across Multi-Agent and Multi-Environment Systems: Opsin provides a single view of AI agent activity spanning multiple GenAI platforms and collaboration tools, eliminating the need to toggle between disconnected dashboards.

- Audit-Ready Logging and Compliance Reporting: Every monitored action and enforcement event is logged. Opsin generates compliance-ready reports aligned with the EU AI Act, NIST AI RMF, and ISO/IEC 42001.

Conclusion

As AI agents become embedded in enterprise operations, dedicated oversight is no longer optional. Guardian agents bridge the gap between governance policy and actual control, monitoring actions in real time, enforcing policies inline, and maintaining audit trails. Opsin delivers this through automated discovery, continuous monitoring, inline enforcement, and audit-ready reporting.

FAQ

How are Guardian Agents different from traditional security tools like firewalls or identity controls?

Guardian agents secure AI behavior itself, not just users or network access.

• Inspect actions taken by AI agents (queries, API calls, tool usage) in real time.

• Evaluate each action against enterprise policies such as data classification or tool permissions.

• Block, modify, or escalate risky agent actions before they execute.

• Provide continuous oversight even when agents operate autonomously without human interaction.

Learn how Opsin provides enterprise-grade AI agent oversight through its platform capabilities.

Why can AI agents expose sensitive data even when existing access controls are in place?

Because AI agents can aggregate, interpret, and redistribute data across systems faster than traditional controls anticipate.

• Agents can surface overshared documents from platforms like SharePoint, OneDrive, or Google Workspace.

• Retrieval-augmented generation (RAG) may combine multiple data sources and reveal sensitive insights.

• Agents can call APIs or connectors that expose data outside the expected workflow.

• Sensitive information can propagate through summaries, reports, or responses generated by the agent.

See how enterprises reduce oversharing risk in AI deployments with Opsin’s ongoing protection approach.

How do Guardian Agents manage security in multi-agent environments?

They monitor agent-to-agent interactions to prevent privilege escalation and uncontrolled task delegation.

• Track when one agent invokes another or shares context across workflows.

• Validate that delegated tasks remain within each agent’s permission scope.

• Detect attempts to bypass security controls through chained workflows.

• Apply risk scoring across the full task chain rather than evaluating actions in isolation.

Explore how organizations secure complex agent ecosystems and enterprise AI deployments.

What architectural patterns help Guardian Agents enforce policies without slowing AI workflows?

Most enterprise deployments use risk-tiered enforcement architectures that balance real-time inspection with performance.

• Apply lightweight checks for routine actions involving low-sensitivity data.

• Trigger deeper inspection when agents access regulated or confidential datasets.

• Cache policy evaluations for frequently repeated tasks to reduce latency.

• Stream action validation during execution rather than after completion.
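Caching policy evaluations can be sketched with Python's standard `functools.lru_cache`. The example assumes a policy decision depends only on the agent, the tool, and the data classification; the `PERMITTED` table stands in for a slower policy engine call and is hypothetical.

```python
from functools import lru_cache

# Hypothetical policy table standing in for an expensive policy engine lookup.
PERMITTED = {
    ("crm.lookup", "internal"),
    ("sharepoint.search", "public"),
}


@lru_cache(maxsize=4096)
def is_permitted(agent_id: str, tool: str, classification: str) -> bool:
    """Evaluate policy once per distinct (agent, tool, classification) triple."""
    return (tool, classification) in PERMITTED
```

Repeated calls with the same arguments are served from the cache, and `is_permitted.cache_info()` reports hits and misses. Because cached decisions go stale when policies change, `is_permitted.cache_clear()` should be called on every policy update.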

Learn how organizations can secure Microsoft Copilot while maintaining productivity.

How does Opsin discover AI agents that security teams might not even know exist?

Opsin continuously scans enterprise environments to identify deployed agents, their permissions, and their connected tools.

• Detects agents created across platforms like Copilot Studio, ChatGPT Enterprise, and Gemini.

• Maps each agent’s access to data sources, APIs, and enterprise applications.

• Identifies shadow agents operating outside centralized governance processes.

• Maintains a continuously updated inventory of AI agents and their risk exposure.

Learn more about Opsin’s AI detection and monitoring capabilities.