Enterprise teams have spent years defining who can access what. They built roles, policies, and audit trails around human actors. Then they deployed AI agents. The problem? Most of those controls don’t apply, which is why organizations now need AI guardian agents.

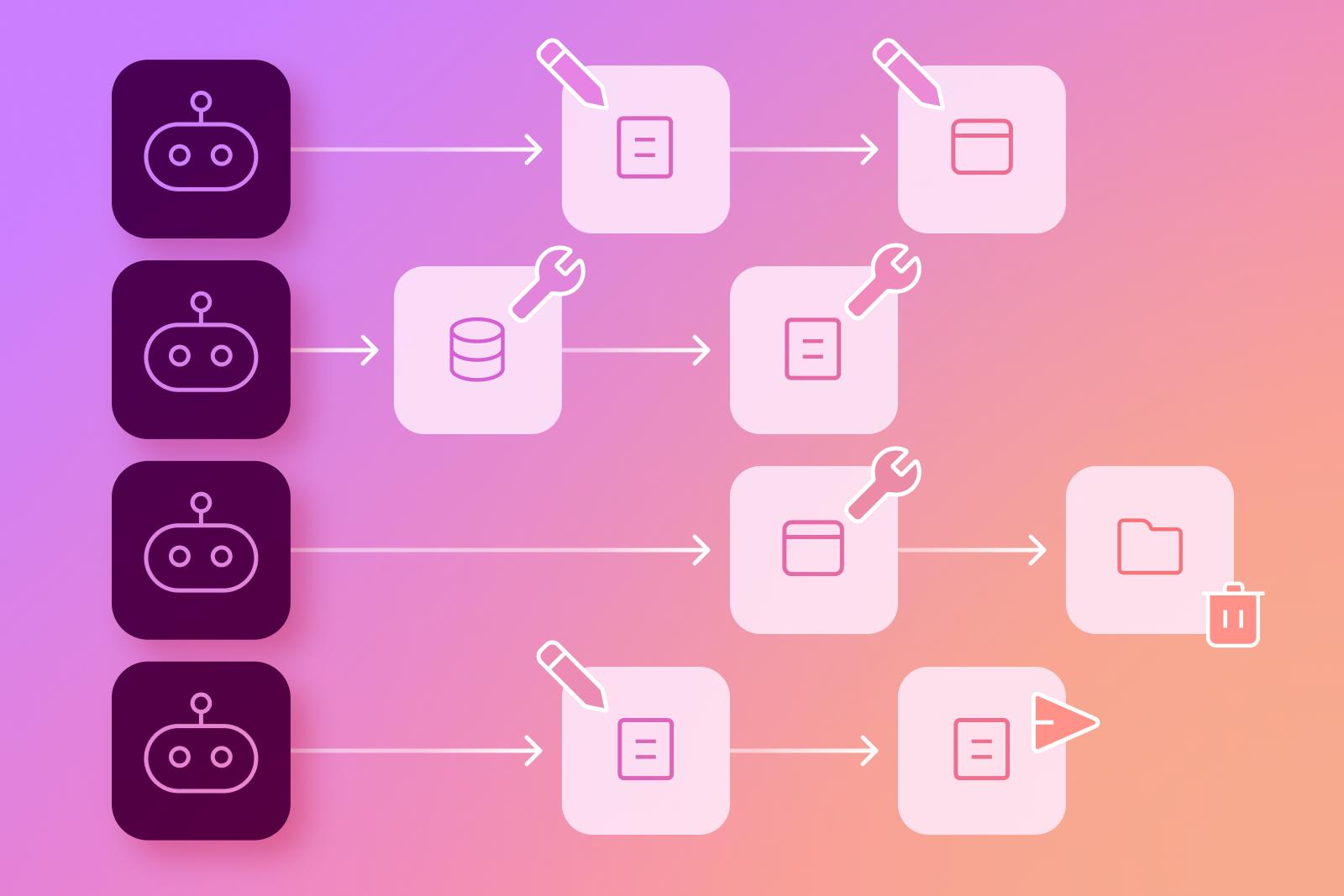

An AI guardian agent is a security and oversight layer that sits between autonomous AI agents and the enterprise resources they interact with. Unlike traditional access controls that grant or deny entry at the perimeter, a guardian layer continuously evaluates agent behavior at runtime.

It validates actions before they execute, enforces organizational policies in real time, and ensures every agent operates within defined boundaries of data access, tool usage, and decision authority. Without this layer, AI agents operate in an oversight vacuum, inheriting whatever permissions they can reach and acting on them without independent verification.

Traditional enterprise security was built around human users interacting with applications through predictable interfaces. AI agents break this model by introducing autonomous (and sometimes unpredictable) actors that operate at machine speed across interconnected systems.

Without a guardian layer, the risks extend well beyond conventional cybersecurity threats. The following risks highlight why unguarded AI agents represent a distinct category of enterprise exposure.

Most security teams have no mechanism in place to intervene between an agent’s decision and its execution. An agent with access to SharePoint, OneDrive, or Google Workspace can surface, summarize, and redistribute sensitive content before anyone recognizes the exposure.

Each tool or API an agent connects to adds a new vector for data exposure. Over-permissive integrations and inherited credentials can allow agents to reach data far beyond their intended scope, especially in environments where legacy permissions have accumulated over the years.

In multi-agent environments, one agent may hand off context, credentials, or intermediate results to another without logging or policy evaluation. These hidden exchanges can propagate sensitive data across agent chains, creating exposure that is difficult to detect or contain.

AI agents generate high volumes of operations across multiple systems in rapid succession. Without purpose-built traceability, organizations cannot determine which agent accessed what data, when, and why, making incident response and compliance reporting unreliable.

Unguarded AI agents that access regulated data without documented oversight expose the enterprise to compliance penalties and reputational harm. The absence of a guardian layer signals a governance gap that auditors and regulators are likely to scrutinize.

Effective guardianship requires controls that address the unique risks autonomous agents introduce. The following table outlines the essential controls, what each involves, and the enterprise benefit it delivers:

A guardian layer is only effective when it operates continuously across the full scope of agent activity. The following steps describe how guardian layers reduce runtime risk.

The placement of the guardian layer within the enterprise AI architecture determines its effectiveness. Guardian layers are most effective when positioned at the following points:

The most critical placement is between the AI agent and the tools it interacts with. This allows the guardian to intercept every tool call, API request, and data query before execution, ensuring no action bypasses policy evaluation.
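To make the interception point concrete, here is a minimal sketch of a guardian that sits between an agent and its tools. The names `Guardian`, `ToolCall`, and `PolicyViolation`, and the per-agent allowlist policy, are assumptions made for illustration and are not taken from any specific product.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Set


class PolicyViolation(Exception):
    """Raised when the guardian blocks a tool call."""


@dataclass
class ToolCall:
    agent_id: str
    tool: str
    args: Dict[str, Any]


class Guardian:
    """Routes every tool call through a policy check before execution."""

    def __init__(self, allowed_tools: Dict[str, Set[str]]):
        # Hypothetical policy shape: agent id -> tool names it may invoke.
        self.allowed_tools = allowed_tools
        self.tools: Dict[str, Callable[..., Any]] = {}

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self.tools[name] = fn

    def invoke(self, call: ToolCall) -> Any:
        # The check runs before the tool does, so a denied call never executes.
        if call.tool not in self.allowed_tools.get(call.agent_id, set()):
            raise PolicyViolation(f"{call.agent_id} may not call {call.tool}")
        return self.tools[call.tool](**call.args)
```

Because agents only hold a reference to `Guardian.invoke` and never to the tools themselves, there is no code path that reaches a tool without passing the check. A real deployment would likely replace the allowlist with a richer policy service.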

In multi-agent deployments, placing guardian controls at the orchestration boundary enforces policies on task delegation, context sharing, and credential inheritance before work is distributed across agents.
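One way to picture enforcement at the orchestration boundary is a delegation function that narrows both credentials and context before a sub-agent receives any work. The sketch below assumes a simple scope-string model and a per-task list of shareable context keys; `delegate`, `Delegation`, and all parameter names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Iterable


@dataclass(frozen=True)
class Delegation:
    child_agent: str
    scopes: FrozenSet[str]
    context: Dict[str, str]


def delegate(
    child_agent: str,
    parent_scopes: Iterable[str],
    child_max_scopes: Iterable[str],
    context: Dict[str, str],
    shareable_keys: Iterable[str],
) -> Delegation:
    # Credential inheritance: the child receives only scopes that the parent
    # holds AND that the child is approved for, never the parent's full set.
    scopes = frozenset(parent_scopes) & frozenset(child_max_scopes)
    # Context sharing: only explicitly shareable keys cross the boundary.
    allowed = set(shareable_keys)
    shared = {k: v for k, v in context.items() if k in allowed}
    return Delegation(child_agent, scopes, shared)
```

For example, a parent holding `files.read` and `mail.send` that delegates to a summarizer approved only for `files.read` passes along that single scope, and a context entry such as an API token is dropped unless its key is listed as shareable.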

The guardian must connect with enterprise identity and access management, security information and event management, and compliance platforms. This ensures agent governance reflects the organization’s broader security posture.

For organizations running agents across Microsoft Copilot, ChatGPT Enterprise, and Google Gemini, the guardian must provide cross-environment visibility to consistently monitor behavior regardless of the underlying platform.

Building a guardian layer that meets enterprise demands requires specific architectural capabilities. The following requirements define what organizations should expect.

The following best practices provide a roadmap for bringing AI agent activity under effective governance:

As AI agents expand across enterprise environments, organizations need a platform that delivers the visibility, control, and enforcement required to govern autonomous AI at scale. Opsin addresses these challenges through a comprehensive approach to AI agent guardianship.

AI agents are transforming enterprise productivity, but they are also introducing risks that traditional security controls were never designed to manage. Autonomous agents that inherit broad permissions, interact with sensitive files and repositories, and operate across interconnected tools demand a new kind of oversight.

The guardian layer is the answer. By placing continuous, context-aware governance between AI agents and enterprise resources, organizations can maintain control without sacrificing the efficiency that agents deliver.

Platforms like Opsin demonstrate how this works: discovering agents, monitoring behavior, enforcing policies, and delivering the visibility that security and compliance teams need to govern AI responsibly.

AI agents act autonomously across multiple systems, which means they can combine permissions, data, and tools in ways traditional scripted automation typically cannot.

• Map every agent’s connected tools, APIs, and data sources before deployment.

• Apply least-privilege access rather than inheriting the deploying user’s permissions.

• Log every agent action (prompt, tool call, data query) to maintain traceability.

• Introduce runtime checks that validate agent actions before execution.
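The logging item in the list above can be sketched as a structured audit record, written as one JSON line per agent action. The field names and the three action types are assumptions chosen to mirror the list (prompt, tool call, data query); an actual schema would follow whatever the organization's logging platform expects.

```python
import json
from datetime import datetime, timezone
from typing import Any, Dict

# Hypothetical action types, mirroring the list above.
ACTION_TYPES = {"prompt", "tool_call", "data_query"}


def audit_record(agent_id: str, action_type: str, detail: Dict[str, Any]) -> str:
    """Serialize one agent action as a JSON line suitable for an append-only log."""
    if action_type not in ACTION_TYPES:
        raise ValueError(f"unknown action type: {action_type}")
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,
        "action_type": action_type,
        "detail": detail,
    }
    return json.dumps(record, sort_keys=True)
```

Recording the agent identity, the action type, and a UTC timestamp on every line is what later lets a responder answer which agent touched what data and when.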

Learn how enterprises evaluate AI exposure using an AI readiness assessment.

Because AI agents can autonomously decide which tools to use and what data to retrieve, static role-based permissions cannot predict or control every action they take.

• Shift from static access policies to runtime policy enforcement.

• Evaluate each agent action based on context (data sensitivity, intent, and scope).

• Monitor agent prompts and outputs for data exposure patterns.

• Implement automated escalation for high-risk actions involving sensitive data.
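As a rough illustration of the runtime evaluation described above, the sketch below decides each action from its context rather than from a static role. The sensitivity labels, intent values, and decision rules are all hypothetical; a real policy would be defined by the organization.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"  # pause the action and route it to a human reviewer
    DENY = "deny"


@dataclass(frozen=True)
class ActionContext:
    sensitivity: str   # assumed labels: "public", "internal", "restricted"
    intent: str        # assumed values: "read", "summarize", "share_external"
    in_scope: bool     # whether the target falls within the agent's declared scope


def evaluate(ctx: ActionContext) -> Decision:
    # Out-of-scope actions are denied regardless of sensitivity.
    if not ctx.in_scope:
        return Decision.DENY
    external = ctx.intent == "share_external"
    if ctx.sensitivity == "restricted":
        # Restricted data never leaves; any other use needs human sign-off.
        return Decision.DENY if external else Decision.ESCALATE
    if ctx.sensitivity == "internal" and external:
        return Decision.ESCALATE
    return Decision.ALLOW
```

The point of the three-way result is that escalation is a first-class outcome: high-risk actions are neither silently allowed nor flatly refused, but held until someone with authority reviews them.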

Multi-agent systems can create emergent behaviors where agents share context, credentials, or intermediate data, potentially amplifying exposure across systems.

• Monitor agent-to-agent context passing and enforce policy checks on shared outputs.

• Implement identity scoping so each agent operates with its own limited credentials.

• Track multi-agent workflow graphs to identify unexpected delegation chains.

• Flag unusual data aggregation patterns across agents and tools.
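Tracking delegation chains, as suggested above, can be approximated by treating observed hand-offs as edges in a directed graph and comparing each path against an approved set. This is a sketch under simple assumptions: hand-offs are already logged as `(from_agent, to_agent)` pairs, and the function names, the approved-edge set, and the `max_hops` limit are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple

Edge = Tuple[str, str]


def delegation_chains(edges: Iterable[Edge], root: str) -> List[List[str]]:
    """Return every delegation path from root to a leaf, skipping cycles."""
    graph: Dict[str, List[str]] = defaultdict(list)
    for src, dst in edges:
        graph[src].append(dst)
    chains: List[List[str]] = []

    def walk(node: str, path: List[str]) -> None:
        # Do not revisit agents already on this path (guards against cycles).
        successors = [n for n in graph.get(node, []) if n not in path]
        if not successors:
            chains.append(path)
            return
        for nxt in successors:
            walk(nxt, path + [nxt])

    walk(root, [root])
    return chains


def flag_chains(
    edges: Iterable[Edge], root: str, approved: Set[Edge], max_hops: int
) -> List[List[str]]:
    """Flag chains that are too long or contain an unapproved hand-off."""
    flagged = []
    for chain in delegation_chains(list(edges), root):
        hops = list(zip(chain, chain[1:]))
        if len(hops) > max_hops or any(hop not in approved for hop in hops):
            flagged.append(chain)
    return flagged
```

With a planner that is approved to delegate to a researcher and a summarizer, an observed hand-off from the researcher to a mail-sending agent would surface the whole planner-to-mailer chain for review.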

Learn more about agent-based architectures and enterprise risk.

The most effective pattern inserts a policy-enforcement layer between agents and the tools or APIs they invoke.

• Intercept every tool call and API request through a policy evaluation service.

• Use event-driven monitoring pipelines to evaluate actions with minimal added latency.

• Maintain centralized policy orchestration across all agent platforms.

• Feed telemetry into SIEM and compliance systems for unified monitoring.
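The event-driven and telemetry items above can be sketched with a background worker that forwards events to a monitoring sink, so the agent's request path only pays for a queue insert. The `TelemetryPipeline` class and the `sink` callable are hypothetical; in practice the sink would be whatever ingestion interface the organization's SIEM provides, which is not specified here.

```python
import json
import queue
import threading
from typing import Callable, Dict

_STOP = object()  # sentinel that tells the worker to exit


class TelemetryPipeline:
    """Forwards guardian events to a sink on a background thread."""

    def __init__(self, sink: Callable[[str], None]):
        self._events: "queue.Queue[object]" = queue.Queue()
        self._sink = sink
        self._worker = threading.Thread(target=self._drain, daemon=True)
        self._worker.start()

    def emit(self, event: Dict[str, str]) -> None:
        # Enqueue and return immediately; delivery happens off the request path.
        self._events.put(event)

    def _drain(self) -> None:
        while True:
            event = self._events.get()
            if event is _STOP:
                break
            self._sink(json.dumps(event, sort_keys=True))

    def close(self) -> None:
        # Flush everything queued before the sentinel, then stop the worker.
        self._events.put(_STOP)
        self._worker.join()
```

Note the design split this implies: the allow-or-deny decision itself still has to happen synchronously before the tool call, while reporting and analysis of that decision can be asynchronous.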

Opsin automatically discovers AI agents and maps their data connections, permissions, and activity to reveal hidden exposure risks.

• Identify Copilot agents, Custom GPTs, and Gemini integrations across environments.

• Map inherited permissions and flag over-privileged agents.

• Prioritize risk based on sensitive data access and business impact.

• Provide centralized visibility across Microsoft 365, Google Workspace, and GenAI tools.
